Cecile Janssens, co-developer of the citation-based search tool CoCites, discusses how such a tool can rapidly and accurately identify relevant scientific articles based on citation and co-citation frequency. Janssens also elaborates on how CoCites can improve its efficiency, intuitiveness, accountability, and reproducibility.

When you search ‘KL622’ in Google, you get the travel details of the upcoming KLM flight from Atlanta to Amsterdam, the scheduled time and departure terminal. Google knows that people who enter a flight number want flight information and assumes you want that too.

When researchers search ‘TCF7L2’ in Google Scholar, Google knows it’s the name of a gene and that it plays a role in diabetes but has no clue what they are looking for. Not even when they search ‘TCF7L2’ AND ‘diabetes’ AND ‘risk’. Researchers seek articles on niche topics and they need to spell out what they want. At least, if they don’t want to scroll through a long list of irrelevant articles first.

A good search finds relevant articles and, preferably, not too much more. Yet, making keyword search queries that are effective and efficient requires expertise that most researchers don’t have.

Tracking citations

A simple and obvious alternative is to track the citations of known relevant articles. Tracking citations makes sense, as researchers cite articles relevant to their topic, but it is notoriously inefficient. Until now. New tools track the citations and co-citations of multiple articles at once and rank their results. Co-citation here means the frequency with which two documents are cited together by other documents.

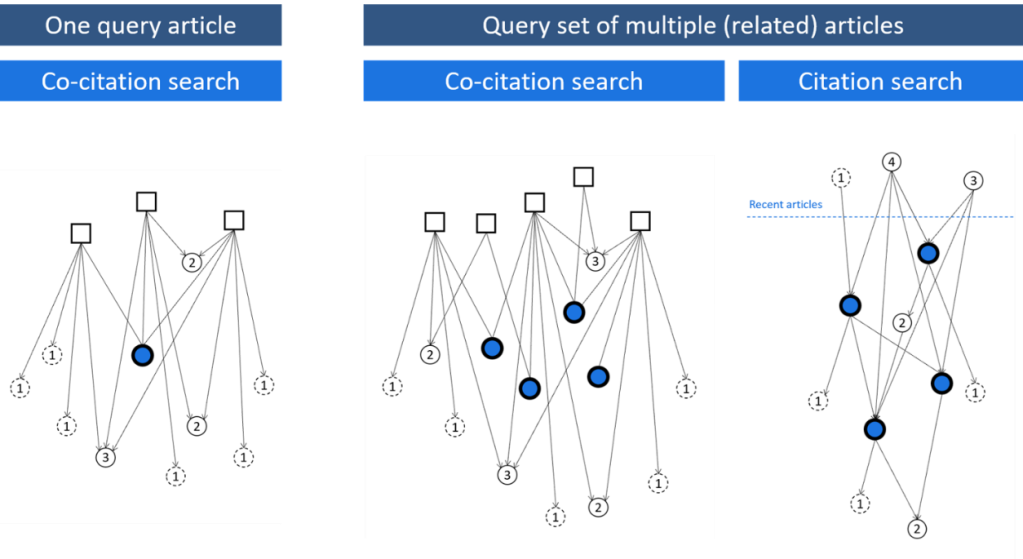

CoCites, a tool we developed several years ago, performs simple co-citation and citation searches (see the figure below; a CoCites tutorial is also available). The co-citation search identifies articles that are cited together with one or more query articles and ranks them in descending order of their co-citation frequency. The citation search finds articles that cite or are cited by the query articles.

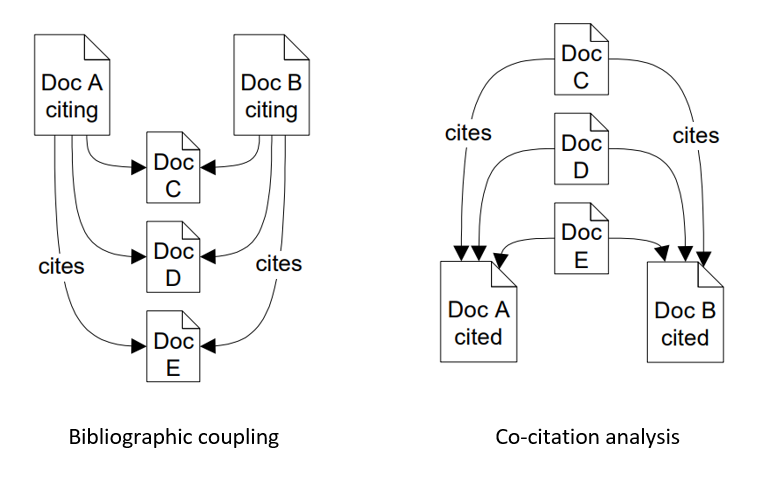
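The co-citation search described above can be sketched in a few lines of Python. This is a minimal illustration, not the CoCites implementation: the function name `cocitation_search`, the article IDs, and the toy data are all hypothetical, and it assumes that with several query articles a citing article counts once whenever it cites at least one of them.

```python
from collections import Counter

def cocitation_search(reference_lists, query_set):
    """Rank articles by how often they are cited together with a query article.

    reference_lists: dict mapping a citing article ID to the set of IDs it cites.
    query_set: set of query article IDs.
    """
    query_set = set(query_set)
    counts = Counter()
    for cited in reference_lists.values():
        cited = set(cited)
        # A citing article contributes only if it cites at least one query article
        # (an assumption made for this sketch).
        if cited & query_set:
            # Every other article in that reference list is co-cited once.
            counts.update(cited - query_set)
    # Descending co-citation frequency; ties broken by ID for a stable ranking.
    return sorted(counts.items(), key=lambda item: (-item[1], item[0]))

# Toy citation data with hypothetical IDs.
reference_lists = {
    "P1": {"Q1", "A", "B"},
    "P2": {"Q1", "Q2", "A"},
    "P3": {"Q2", "A", "C"},
    "P4": {"B", "C"},  # cites no query article, so it is ignored
}
ranking = cocitation_search(reference_lists, {"Q1", "Q2"})
print(ranking)
```

In this toy data, article A is cited alongside a query article by three citing papers, so it ranks above B and C, which are each co-cited once.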

The principles behind citation tracking are decades old in scientometric research. In 1973, Henry Small proposed that co-citations reflect the similarity between articles [1], and a decade earlier, Kessler had argued the same for bibliographic coupling, the overlap of reference lists that is behind the citation search [2]. The citation count is the overlap between an article’s reference list and the query set, which can be seen as the reference list of a fictional paper. See Figure 2 for an illustration of bibliographic coupling and co-citation analysis.
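To make the overlap idea concrete, the following Python sketch computes the citation count as the size of the intersection between each article's reference list and the query set. It is an illustration under stated assumptions rather than the CoCites code: the name `citation_counts`, the `min_overlap` parameter, and the toy IDs are hypothetical, and only the "citing" direction of the citation search is covered.

```python
def citation_counts(reference_lists, query_set, min_overlap=1):
    """Count how many query articles each article cites (bibliographic coupling
    with the query set treated as the reference list of a fictional paper)."""
    query_set = set(query_set)
    overlaps = {}
    for article, refs in reference_lists.items():
        if article in query_set:
            continue  # query articles are not search results
        overlap = len(set(refs) & query_set)
        if overlap >= min_overlap:
            overlaps[article] = overlap
    # Highest overlap first; ties broken by ID for a stable ranking.
    return sorted(overlaps.items(), key=lambda item: (-item[1], item[0]))

# Toy data with hypothetical IDs.
reference_lists = {
    "N1": {"Q1", "Q2", "X"},
    "N2": {"Q2", "Y"},
    "N3": {"X", "Y"},   # shares nothing with the query set
    "Q1": {"Q2"},       # a query article itself
}
all_hits = citation_counts(reference_lists, {"Q1", "Q2"})
strict_hits = citation_counts(reference_lists, {"Q1", "Q2"}, min_overlap=2)
print(all_hits, strict_hits)
```

Raising `min_overlap` to 2 keeps only articles that cite two or more query articles, at the cost of dropping articles such as N2 that cite just one.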

Our research group studied the citation tool we developed internally using Web of Science (WoS) data and found that co-citation searching retrieved the key articles on a niche topic [4]. Using WoS data*, the tool missed articles that are infrequently cited, such as conference abstracts, non-English articles, dissertations, and reports, but these articles were retrieved by the citation search when they cited two or more of the key articles from the query set.

The impressive findings of our small pilot study motivated us to build CoCites and to plan for a larger study. We had two questions. As the principles behind co-citation and citation searching are already decades old, we wanted to know how the ‘method’ of citation tracking can be further improved. And as the search results depend on the articles in the query set, we wanted to know when we can expect the method to find all the relevant articles on a niche topic.

Improving the search method

As the citation searches can be expanded in many ways, we first discussed what kind of method we wanted to develop. We came up with the following criteria. The method needed to be:

- Effective and efficient. The method should find relevant articles and not too much more.

- Intuitive. The method should make sense to the user. It should be understandable how the tool can find relevant literature so users know when they can depend on it. For that, the method should be parsimonious and elegant. In addition, any improvements to the method should retrieve articles not previously found with simpler versions and not merely deliver slightly different rankings of the same articles.

- Accountable. The method should be justifiable and explainable to colleagues. If a user writes in a paper that they used CoCites for their literature search, then the reader needs to understand from the description of the method that using CoCites was appropriate for the purpose of the study.

- Reproducible. The user’s search query should give the same results irrespective of who runs the query and what they have searched before.

With these criteria in mind, we considered the following changes for CoCites:

- Combine the co-citation and citation results in one ranking. The two searches can run simultaneously, with their results presented in one ranking. We tried this but decided against it, as the citation search appeared more effective when the query set was larger. Our study showed that it is better to run the co-citation search first, select the relevant results and add them to the query set, and then run the citation search.

- Rank on a different indicator. When query articles are highly cited, their top-ranked results are highly cited too, even if the articles are not relevant. These irrelevant results are removed by ranking the search results on the percentage of co-citations as part of the total citations. Unfortunately, this percentage promotes irrelevant ‘lowly cited’ articles to the top of the list. The percentage is, however, an excellent search filter. It reduces the number of articles in the search results without compromising the yield of relevant articles [5].

- Citation context. The placement of citations in the text and their proximity to each other have been proposed as ways to improve the ranking of search results (e.g., [6], [7]). We did not consider adding the citation context, as it is unknown whether it helps to retrieve articles that would otherwise not be retrieved. It seems too much to ask from citation data. Researchers don’t cite the work of others carefully (see, e.g., [8]).
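The difference between ranking on the percentage of co-citations and using it as a filter can be shown with a small Python sketch. Everything specific here is an assumption for illustration: the function name `filter_by_cocitation_share`, the 5% threshold, and the toy numbers do not come from the study.

```python
def filter_by_cocitation_share(cocitations, total_citations, min_share=0.05):
    """Keep articles whose co-citations make up at least `min_share` of their
    total citations, then rank the survivors by co-citation frequency."""
    kept = []
    for article, n_co in cocitations.items():
        total = total_citations.get(article, 0)
        if total <= 0:
            continue  # the share is undefined without a citation total
        share = n_co / total
        if share >= min_share:
            kept.append((article, n_co, share))
    # The share only filters; the ranking still uses the co-citation frequency.
    kept.sort(key=lambda row: (-row[1], row[0]))
    return kept

# Toy numbers with hypothetical IDs.
cocitations = {"A": 40, "B": 30, "C": 3}
total_citations = {"A": 4000, "B": 100, "C": 4}
kept = filter_by_cocitation_share(cocitations, total_citations)
print([article for article, _, _ in kept])
```

In this example, A is highly cited overall but only 1% of its citations are co-citations, so the filter drops it. Ranking on the share alone would have put the lowly cited C (75%) above B (30%); filtering on the share and ranking on the frequency keeps B first.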

When can citation tracking be relied upon?

We found that the biggest improvement in the relevance of the search results was achieved by a more mindful application of the method. From our research [9], we concluded:

- The co-citation search finds the key articles on a niche topic if the query article has sufficient citations. When these key articles are added to the query set, an updated co-citation search helps retrieve other relevant articles.

- The citation search finds recent articles and others that don’t have enough citations. This search becomes more effective when the query set has a larger number of articles.

- The percentage of co-citations is an effective filter to reduce the number of articles in the search results.

- Relevant articles that are missed are lowly cited articles that themselves do not cite any relevant articles from the query set. All others are retrieved if the query set is sufficiently large.
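The conclusions above suggest a two-step workflow, sketched below in Python. This is a simplified illustration, not the procedure as implemented in CoCites: the helper names, the `max_rounds` limit, and the toy data are hypothetical, and the `is_relevant` callable stands in for the manual screening a researcher would do.

```python
from collections import Counter

def cocited(reference_lists, query_set):
    """Articles cited together with any query article, most co-cited first."""
    counts = Counter()
    for refs in reference_lists.values():
        if refs & query_set:
            counts.update(refs - query_set)
    return [a for a, _ in sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))]

def citing(reference_lists, query_set):
    """Articles outside the query set that cite at least one query article."""
    return sorted(a for a, refs in reference_lists.items()
                  if a not in query_set and refs & query_set)

def two_step_search(reference_lists, seed, is_relevant, max_rounds=5):
    query_set = set(seed)
    # Step 1: repeat the co-citation search, adding relevant hits to the query set.
    for _ in range(max_rounds):
        new = {a for a in cocited(reference_lists, query_set) if is_relevant(a)}
        if not new:
            break
        query_set |= new
    # Step 2: run the citation search on the enlarged query set.
    found = {a for a in citing(reference_lists, query_set) if is_relevant(a)}
    return query_set | found

# Toy data with hypothetical IDs; values are sets of cited article IDs.
reference_lists = {
    "P1": {"K1", "K2"},
    "P2": {"K2", "K3", "Z"},
    "P3": {"K1", "K3"},
    "R1": {"K3"},  # recent article: not yet cited, but cites a key article
    "U1": {"Z"},   # uncited and cites no query article
}
relevant = {"K1", "K2", "K3", "R1", "U1"}
result = two_step_search(reference_lists, {"K1"}, lambda a: a in relevant)
print(sorted(result))
```

Starting from the single seed K1, the co-citation rounds pick up K2 and K3, and the citation search then finds the recent article R1. U1 is marked relevant in the toy data but is never retrieved, because it is uncited and cites none of the query articles, which matches the last conclusion above.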

Tracking citations is useful as an alternative or complementary method for keyword searches. It is ideal for review projects, such as systematic reviews, meta-analyses, and rapid reviews, and for other search projects where the goal is to find more articles on a niche topic, such as thesis projects of students. The citation search is ideal for updating reviews, as newer articles likely cite the review and/or one or more of the studies in it. And the co-citation search is handy for finding out if an article is the best known and widely cited on a niche topic, as the search finds the key papers on a topic.

Citations connect articles on the same topic. The citations of a single article may be sloppy and inaccurate, but across articles they provide the similarity ranking that was proposed decades ago. This similarity is not based on the opinion of one researcher, but on the citation behavior of the entire field. Citation tracking is based on crowd-sourced expert knowledge.

*The study was based on data from Web of Science. The current version of CoCites uses data from the NIH Open Citation Collection.

About the Author

Cecile Janssens is research professor of epidemiology at the Rollins School of Public Health of Emory University. Her work focuses on the improvement of research methods, including literature retrieval. With her team she developed CoCites, a citation-based search tool for scientific literature.

References

1. Small H: Co-citation in the scientific literature: A new measure of the relationship between two documents. Journal of the American Society for Information Science 1973, 24(4):265-269. https://doi.org/10.1002/asi.4630240406

2. Kessler MM: Bibliographic coupling between scientific papers. American Documentation 1963, 14(1):10-25. https://doi.org/10.1002/asi.5090140103

3. Gipp B, Beel J: Citation Proximity Analysis (CPA): A new approach for identifying related work based on co-citation analysis. Proceedings of the 12th International Conference on Scientometrics and Informetrics (ISSI’09), Rio de Janeiro, Brazil, 2009. https://www.issi-society.org/proceedings/issi_2009/ISSI2009-proc-vol2_Aug2009_batch1-paper-4.pdf

4. Janssens AC, Gwinn M: Novel citation-based search method for scientific literature: application to meta-analyses. BMC Med Res Methodol 2015, 15:84. https://doi.org/10.1186/s12874-015-0077-z

5. Janssens AC, Goodman M, Gwinn M: Two strategies for improving the efficiency of citation-based literature searches. (Manuscript in preparation)

6. Eto M: Extended co-citation search: Graph-based document retrieval on a co-citation network containing citation context information. Information Processing & Management 2019, 56(6):102046. https://doi.org/10.1016/j.ipm.2019.05.007

7. Hsiao TM, Chen KH: Yet another method for author co‐citation analysis: A new approach based on paragraph similarity. Proceedings of the Association for Information Science and Technology 2017, 54(1):170-178. https://doi.org/10.1002/pra2.2017.14505401019

8. Penders B: Ten simple rules for responsible referencing. PLOS Computational Biology 2018, 14(4):e1006036. https://doi.org/10.1371/journal.pcbi.1006036

9. Janssens A, Gwinn M, Brockman JE, Powell K, Goodman M: Novel citation-based search method for scientific literature: a validation study. BMC Med Res Methodol 2020, 20(1):25. https://doi.org/10.1186/s12874-020-0907-5

Unless it states otherwise, the content of the Bibliomagician is licensed under a Creative Commons Attribution 4.0 International License.
